Docker's MCP Catalog and Toolkit: What It Is and Why It Matters

So Docker has shipped its MCP Toolkit. If you've been wiring up Claude or Cursor to talk to outside tools and felt like you were duct-taping JSON config files together at 2 am, you're not the only one. The Model Context Protocol crowd has grown fast, and the plumbing has stayed messy. Docker's answer: package the servers as containers, list them in a catalogue, and put a single gateway in front of the lot.

Let me explain what's actually new, what it does, and where it fits.

The mess MCP was creating

Model Context Protocol is the open spec that lets a model talk to external tools, files, and APIs in a structured way. It works. But every server tends to have its own install steps, its own runtime, and its own quirks. Want GitHub, Atlassian, and a search tool wired into your editor? You'd be cloning three repos, juggling Node and Python versions, and stuffing API keys into config files that almost certainly end up in someone's screenshare by accident.

Docker's pitch is straightforward: containers already solved this for web apps a decade ago, so do the same for MCP servers (Docker Docs).

What the MCP Toolkit actually is

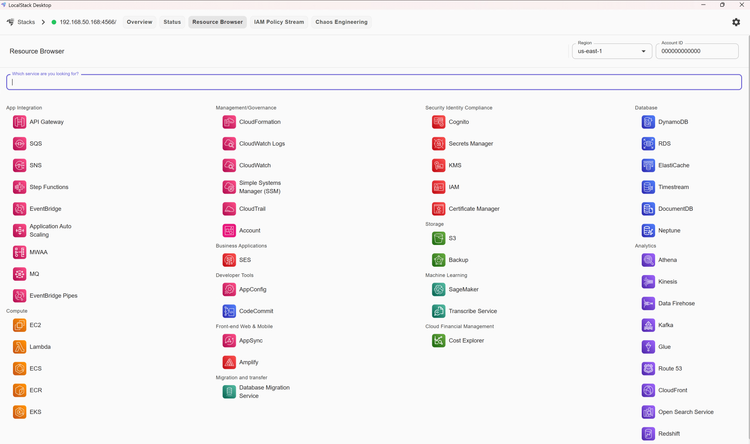

The MCP Toolkit is a management surface inside Docker Desktop (4.62 and up) that lets you browse, launch, and configure containerised MCP servers, then point your AI client at them (Docker Docs). Underneath, every server is a regular container image, so you don't need Node, Python, or some random binary on your host to run them.

The Toolkit comes with three pieces worth knowing about:

- The Catalog: a curated list of over 200 verified MCP servers, packaged as signed images with SBOMs, provenance, and security updates (Docker Docs).

- The Gateway: a single endpoint your client connects to, which fronts every server you've enabled.

- Profiles: named bundles of servers and their config, so your "data analysis" stack doesn't bleed into your "infra ops" stack.

I'll come back to each.

The Catalog: a registry, but for AI tools

If Docker Hub is where you go for a Postgres image, the MCP Catalog is where you go for a YouTube transcript reader, a Brave Search server, a Notion connector, an Atlassian bridge, and so on. Images live under the mcp/ namespace, are signed by Docker, and ship with a Software Bill of Materials so you can see exactly what's inside (Docker Docs).

The supply-chain story matters here. MCP servers run with privileges your model gets to use, which means a poisoned image is a really bad day. Signed images, provenance checks, and Scout integration push that risk closer to the floor.

The Gateway: one socket to rule them all

Here's where it gets clever. Instead of every client opening a connection to every server, the Gateway sits in the middle and aggregates them (Docker blog). Your client (Claude Desktop, Cursor, VS Code, Windsurf, Goose, continue.dev, or OpenAI's Codex CLI) connects once. The Gateway:

- starts and stops the underlying containers,

- proxies trusted remote servers when you'd rather not run them locally,

- handles OAuth flows so you don't paste tokens into config files,

- and mounts secrets only into the container that needs them.

You can run more than one Gateway, each with a different profile attached. So a "chess tools" Gateway and a "cloud ops" Gateway can sit side by side without stepping on each other.
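If the aggregation idea feels abstract, here is a toy model of it in Python. This is a sketch of the pattern only, not Docker's Gateway code: the ToyGateway class, the "server.tool" naming scheme, and the fake tools are all made up for illustration. It shows one entry point fronting several servers, and two gateways with different profiles living side by side.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical type for illustration: a tool takes arguments and returns text.
ToolFn = Callable[[dict], str]


@dataclass
class ToyGateway:
    """Toy stand-in for a gateway: one entry point in front of many servers."""

    profile: str
    servers: Dict[str, Dict[str, ToolFn]] = field(default_factory=dict)

    def enable(self, server: str, tools: Dict[str, ToolFn]) -> None:
        # Register a server and the tools it exposes.
        self.servers[server] = tools

    def list_tools(self) -> List[str]:
        # One merged listing, so the client never talks to servers directly.
        return sorted(
            f"{server}.{tool}"
            for server, tools in self.servers.items()
            for tool in tools
        )

    def call(self, qualified_name: str, arguments: dict) -> str:
        # Route "server.tool" to the right server.
        server, _, tool = qualified_name.partition(".")
        try:
            fn = self.servers[server][tool]
        except KeyError:
            raise KeyError(f"unknown tool: {qualified_name}") from None
        return fn(arguments)


# Two gateways, two profiles, no shared state.
cloud = ToyGateway(profile="cloud ops")
cloud.enable("github", {"list_issues": lambda a: f"issues for {a['repo']}"})
cloud.enable("search", {"query": lambda a: f"results for {a['q']}"})

chess = ToyGateway(profile="chess tools")
chess.enable("engine", {"best_move": lambda a: "e2e4"})

print(cloud.list_tools())
print(cloud.call("github.list_issues", {"repo": "octo/demo"}))
print(chess.list_tools())
```

The real Gateway also manages container lifecycles, OAuth, and secrets, none of which this sketch attempts.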

Profiles and the March 2026 templates

Profiles are just named collections: pick a few servers, set their config, save the bundle. They're version-controllable, which is the bit teams care about. Compose-first means a profile can move from a laptop into Cloud Run or Azure Container Apps without much rework (Docker blog).
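To make "named collection you can version-control" concrete, here is a minimal Python sketch. The schema is an assumption for illustration (a name plus per-server config), not Docker's actual profile format, and the server names and settings are placeholders. The point is only that a profile is plain data that round-trips through a text file with stable, diff-friendly output.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class Profile:
    """Hypothetical profile shape: a name plus config for each server."""

    name: str
    servers: Dict[str, Dict[str, str]] = field(default_factory=dict)

    def to_json(self) -> str:
        # sort_keys keeps the output stable, so diffs in version control stay small.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "Profile":
        data = json.loads(text)
        return cls(name=data["name"], servers=data["servers"])


# Placeholder servers and settings, not real Toolkit config keys.
web = Profile(
    name="web-work",
    servers={
        "playwright": {"headless": "true"},
        "github": {"org": "example-org"},
    },
)

saved = web.to_json()                # what you would commit
restored = Profile.from_json(saved)  # what a teammate would load
print(saved)
```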

In March 2026, Docker Desktop 4.67 added Profile Templates: a starter-pack idea applied to MCP, surfaced through the Profiles tab and the docker mcp profile create CLI (Doolpa for the release date). Pick a card on the Profiles tab, get a pre-configured bundle for web work (think GitHub plus Playwright), data analysis, or cloud infra, and you're running in seconds rather than fiddling with five separate setup guides.

Security, but the sort that doesn't make you cry

A few things make the security model nicer than the DIY route:

- Image signing and provenance on everything in the catalogue.

- OAuth handled in-browser, with credentials stored by the Toolkit rather than scattered across YAML.

- Per-container secret mounts so your GitHub token isn't visible to the Notion server.

- Scoped tokens for services like GitHub, so the Toolkit hands the model the narrowest credential it can get away with rather than a blanket personal access token (Docker blog).

None of this is magic. It's the same supply-chain hygiene Docker already pushed for application images, just pointed at a new audience.
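The per-container secret idea from the list above is easy to model. What follows is a sketch of the principle in Python, not the Toolkit's mechanism: the secrets_for function, the NEEDS table, and the token values are hypothetical. Each server declares what it needs, and it receives that and nothing else.

```python
from typing import Dict, Set

# Hypothetical store of every credential the user has authorised.
VAULT: Dict[str, str] = {
    "GITHUB_TOKEN": "ghp_example",
    "NOTION_TOKEN": "secret_example",
}

# Hypothetical declaration of which server needs which secret.
NEEDS: Dict[str, Set[str]] = {
    "github": {"GITHUB_TOKEN"},
    "notion": {"NOTION_TOKEN"},
    "search": set(),
}


def secrets_for(server: str, needs: Dict[str, Set[str]], vault: Dict[str, str]) -> Dict[str, str]:
    """Return only the secrets this server declared, failing loudly on gaps."""
    wanted = needs.get(server, set())
    missing = wanted - set(vault)
    if missing:
        raise KeyError(f"{server} is missing secrets: {sorted(missing)}")
    return {name: vault[name] for name in sorted(wanted)}


print(secrets_for("github", NEEDS, VAULT))  # only the GitHub token
print(secrets_for("search", NEEDS, VAULT))  # nothing at all
```

A server that is not listed in NEEDS gets an empty mapping, which is the safe default: no declaration, no secrets.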

Using it from the CLI

If you live in your terminal, the Toolkit ships with a docker mcp command set so you can list servers, enable them, and start the Gateway without ever opening Desktop's UI (Docker Docs). Handy for scripts and CI, and frankly nicer when you're already in flow.

A first-time run looks roughly like this:

- Update Docker Desktop to 4.62 or later.

- Open the MCP Toolkit panel, browse the Catalog, click into a server you want.

- Authenticate where needed (OAuth pops a browser window).

- Add the server to a profile.

- Start the Gateway.

- Point Claude, Cursor, or VS Code at it.

- Run mcp list from the client and watch the tools appear.

That's the loop. No virtualenvs, no Node version managers, no "works on my machine" tickets.
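Under the hood, "watch the tools appear" is one protocol exchange. MCP speaks JSON-RPC 2.0, and a client discovers tools by sending a tools/list request. Here is a minimal Python sketch of that message and of reading the reply. The sample response is invented for illustration (the tool names are placeholders), and a real client would send this over its transport rather than build strings by hand.

```python
import json
from typing import List


def tools_list_request(request_id: int) -> str:
    """Build the JSON-RPC 2.0 request an MCP client sends to discover tools."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"})


def tool_names(response_text: str) -> List[str]:
    """Pull tool names out of a tools/list response, surfacing protocol errors."""
    message = json.loads(response_text)
    if "error" in message:
        raise RuntimeError(message["error"].get("message", "unknown error"))
    return [tool["name"] for tool in message["result"]["tools"]]


# Invented sample reply; the tool names are placeholders.
sample_response = json.dumps(
    {
        "jsonrpc": "2.0",
        "id": 1,
        "result": {
            "tools": [
                {"name": "search_web", "description": "Placeholder search tool",
                 "inputSchema": {"type": "object"}},
                {"name": "get_transcript", "description": "Placeholder transcript tool",
                 "inputSchema": {"type": "object"}},
            ]
        },
    }
)

print(tools_list_request(1))
print(tool_names(sample_response))
```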

Wiring it into Codex

Codex deserves its own note because Docker has done the integration work properly. OpenAI's Codex CLI and IDE extension share an MCP config at ~/.codex/config.toml, and Docker ships a one-shot helper that fills it in for you (Docker blog):

docker mcp client configure codex

Run that once, and any server you've enabled in your Toolkit profile shows up inside Codex on the next launch. The same trick works for Claude Desktop, Cursor, and the others, but the Codex story is the freshest one and the easiest to recommend if you're already in the OpenAI camp (OpenAI Developers).

Who actually benefits

A few honest answers:

- Solo developers who've been dabbling with MCP and want to try ten servers without breaking their machine.

- Teams that need shared, reproducible tool stacks. Compose files give you a real source of truth.

- Security-conscious shops that won't run unsigned binaries from random GitHub repos. Now they don't have to.

- Anyone moving from prototype to production. The same profile that runs locally can ship to Cloud Run, Azure, or wherever your container platform lives.

If you're not using MCP yet, the Toolkit lowers the trial cost so much that there's no real reason not to poke at it for an afternoon.

Where it goes from here

Docker is treating MCP the way it treated the early days of containers: turn the messy install dance into a one-click experience, build a catalogue, then add governance on top. The Gateway being open source means the community can extend it, which is the bit that'll matter long-term (Docker blog).

Worth a look if you've spent any time wiring AI tools by hand. You'll get that hour back, plus a few more.

Sources

- Docker MCP Toolkit | Docker Docs

- Docker MCP Catalog and Toolkit | Docker Docs

- Docker MCP Catalog | Docker Docs

- MCP Profiles | Docker Docs

- Use MCP Toolkit from the CLI | Docker Docs

- Introducing Docker MCP Catalog and Toolkit | Docker blog

- AI Guide to the Galaxy: MCP Toolkit and Gateway, Explained | Docker blog

- Connect Codex to MCP Servers via Docker MCP Toolkit | Docker blog

- Model Context Protocol - Codex | OpenAI Developers

- Docker Desktop 4.67: MCP Profile Templates | Doolpa
