Run AWS on Your Laptop: A 9-Part LocalStack Build Series (Part 0 — Setup)

Welcome to a 9-part hands-on series on building real things with LocalStack. We'll build a small but real backend on your laptop — photo uploads, image processing, a URL shortener with JWT auth, async job queues, scheduled workflows, encrypted secrets, the lot — all running locally, all without an AWS bill. New parts land as I finish them. By the end you'll have a working stack provisioned with Terraform and tested in GitHub Actions, plus the kind of feel for AWS that only comes from actually wiring it up rather than reading docs.

This first article is the "start here" piece. In about 30 minutes, you'll have LocalStack running on your laptop, the AWS CLI pointed at the right endpoint, and a smoke-tested S3 bucket, ready for the next part.

What you'll need: Docker (Docker Desktop on Mac/Windows, or Docker Engine on Linux), about 30 minutes, and a free LocalStack account.

What is LocalStack, in one paragraph

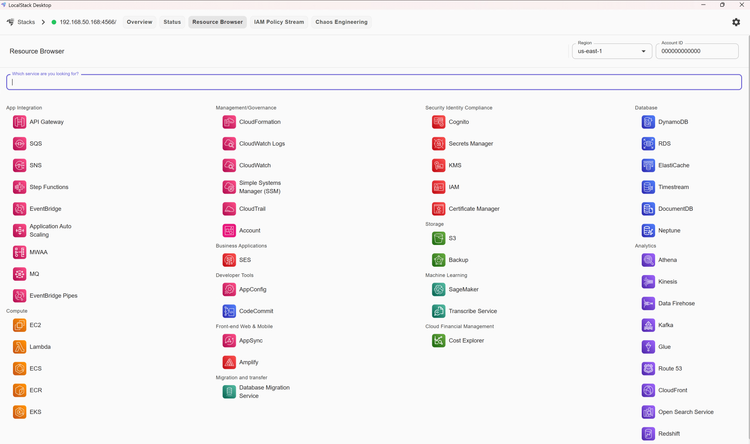

LocalStack is an emulator that runs a fake AWS on your laptop. You point your AWS CLI or SDK at http://localhost:4566 instead of the real AWS endpoints, and the rest of your code stays the same. Most of the AWS services you'd actually use day to day are covered — S3, Lambda, DynamoDB, SQS, SNS, EventBridge, API Gateway, Step Functions, Secrets Manager, KMS, and more. The exact count varies by plan and release; the free Hobby plan advertises 30+ services, paid tiers more. If you want the longer conceptual intro, my older post LocalStack: Run AWS Services Locally for Free is the place. It pre-dates the March 2026 packaging change (which we'll handle in a moment) but the model still holds.

What we're building over the series

One coherent project, threaded through the next nine weeks. By the end of week nine you'll have:

- A photo upload flow (S3 + presigned URLs) where the browser uploads directly to a bucket

- An image processing pipeline (Lambda fired by S3 events) that creates thumbnails

- A URL shortener API (DynamoDB + API Gateway + Lambda + JWT auth) so users can share short links

- A background job queue (SQS + SNS + dead-letter queue) for things like welcome emails

- A scheduled workflow (EventBridge + Step Functions) running nightly cleanup and reprocessing

- Encrypted secrets (Secrets Manager + KMS) for API keys and third-party credentials

- The whole stack provisioned with Terraform (via tflocal) and tested in GitHub Actions CI

These are the same architectural patterns most small SaaS apps use, and the same ones you'd use against real AWS; the only difference is the endpoint URL.

Who this series is for

You'll get the most out of it if:

- You write code in Node, Python, Go, or any language with an AWS SDK

- You've used AWS before, even briefly, but don't want to keep paying to learn

- You'd like to write integration tests that actually exercise S3, Lambda, and DynamoDB

- You're a homelabber who wants the AWS patterns running on your own hardware

- You're sizing up AWS for a side project and want to prototype before committing

If you've never opened the AWS console, the older intro post is the friendlier starting point.

Heads up: the 2026 packaging change

Quick footnote because it'll catch you out otherwise. In the March 2026 release (2026.03.0), LocalStack consolidated its two Docker images into one and folded the previous free Community Edition into a free Hobby plan for non-commercial use. The image is still free — you just register an account and pass an auth token to the container. Step 1 below walks through it.

Step 1: Grab your auth token

Sign up at app.localstack.cloud, confirm the email, and copy the auth token from your account page. Add it to your shell config so every future shell picks it up:

# ~/.zshrc or ~/.bashrc

export LOCALSTACK_AUTH_TOKEN=ls-...your-token-here...

Reload your shell (source ~/.zshrc) before moving on.
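A quick guard can catch a missing token before Docker does. This is a minimal sketch of my own, not something LocalStack ships: check_localstack_token is a hypothetical helper name, and it only confirms the variable is non-empty in the current shell. It cannot tell whether the token itself is valid.

```shell
# Hypothetical helper: confirm LOCALSTACK_AUTH_TOKEN is visible in this shell.
# It only checks for a non-empty value; it does not validate the token.
check_localstack_token() {
  if [ -z "${LOCALSTACK_AUTH_TOKEN:-}" ]; then
    echo "LOCALSTACK_AUTH_TOKEN is not set in this shell" >&2
    return 1
  fi
  # Print only the length so the token never lands in scrollback or logs.
  echo "token present (${#LOCALSTACK_AUTH_TOKEN} characters)"
}
```

Run check_localstack_token in the same terminal you'll use for docker compose; a non-zero exit means the export hasn't been picked up yet.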

Step 2: Set up the project folder

Everything in this series will live under one folder. Create it now:

mkdir -p ~/projects/localstack-series

cd ~/projects/localstack-series

We'll add subdirectories per article as the series goes on (part1-s3-uploader/, part2-dynamodb/, and so on).

Step 3: The docker-compose.yml

Drop this in the project root. It's the file you'll keep coming back to:

# docker-compose.yml
services:
  localstack:
    container_name: localstack
    image: localstack/localstack:latest
    ports:
      - "127.0.0.1:4566:4566"
      - "127.0.0.1:4510-4559:4510-4559"
    environment:
      - LOCALSTACK_AUTH_TOKEN=${LOCALSTACK_AUTH_TOKEN}
      - DEBUG=0
      - PERSISTENCE=1
      - SERVICES=s3,lambda,dynamodb,sqs,sns,events,iam,sts,apigateway,secretsmanager,kms,stepfunctions,logs
    volumes:
      - "./init:/etc/localstack/init"
      - "./.localstack:/var/lib/localstack"
      - "/var/run/docker.sock:/var/run/docker.sock"

A few choices worth explaining:

- localstack/localstack:latest is the simplest "always current" pin. The image moves with each monthly release; if you'd rather lock to a specific version once you're up and running, swap latest for the full version tag (2026.04.0 or whatever's current at app.localstack.cloud).

- Port 4566 is the LocalStack Gateway: every emulated AWS service is reachable through it. The 4510-4559 range is the external services port range that some services use for their own endpoints.

- SERVICES restricts LocalStack to only the listed services; anything not in the list is disabled. Useful for keeping the memory footprint small and for failing loudly if your code accidentally calls something you didn't intend to test. By default LocalStack lazy-loads services on first use, so unused ones cost nothing, but disabling them entirely is cleaner.

- PERSISTENCE=1 plus the .localstack volume is the configuration that saves state between restarts on the paid Base and Ultimate plans. On the free Hobby tier the env var is silently unsupported, so state wipes on every docker compose down. I've left the variable in the compose file because it's harmless when ignored and starts working automatically the moment you upgrade. For Hobby readers, plan to recreate resources at the start of each article in the series. None of it is heavy, but it's worth knowing up front.

- The Docker socket mount is needed for Lambda. LocalStack runs Lambda functions in sibling Docker containers, so it needs to talk to the host's Docker daemon. Without this mount, Lambda invocations fail with "Docker not available".

Add a .gitignore while you're here (the init/ folder is not ignored — it'll hold our checkpoint scripts and we want those committed):

.localstack/

*.zip

node_modules/

__pycache__/

Step 4: Bring it up

docker compose up -d

docker compose logs -f localstack

You're looking for output that ends with:

LocalStack version: 2026.04.x

LocalStack build date: 2026-...

Ready.

Hit Ctrl+C to stop following logs; the container keeps running. If "Ready." doesn't appear within 30 seconds, jump to the Common pitfalls section further down.
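If you'd rather not watch the logs, you can poll LocalStack's health endpoint instead. The sketch below assumes curl is installed and that the gateway is on the default port from the compose file; wait_for_localstack is a hypothetical helper name, and the 30-attempt default simply mirrors the 30-second rule of thumb above.

```shell
# Hypothetical helper: poll the health endpoint until LocalStack answers.
# Usage: wait_for_localstack [url] [max_attempts]
wait_for_localstack() {
  local url="${1:-http://localhost:4566/_localstack/health}"
  local tries="${2:-30}"
  local i=0
  while [ "$i" -lt "$tries" ]; do
    # -s hides progress output, -f turns HTTP errors into a non-zero exit.
    if curl -sf "$url" >/dev/null 2>&1; then
      echo "LocalStack is up"
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  echo "LocalStack did not respond after $tries attempts" >&2
  return 1
}
```

Something like docker compose up -d && wait_for_localstack then works as a single "start and wait" step, and it is a sturdier replacement for a fixed sleep in scripts.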

Step 5: Install the AWS CLI and point it at LocalStack

The official AWS CLI talks to LocalStack the same way it talks to real AWS — you just point it at LocalStack's endpoint URL.

Install it if you don't already have it:

brew install awscli # macOS

sudo apt install awscli # Debian/Ubuntu

# Windows: installer from https://aws.amazon.com/cli/

Configure it once for LocalStack. Add these to your shell profile (~/.zshrc, ~/.bashrc, or PowerShell $PROFILE):

export AWS_ENDPOINT_URL=http://localhost:4566

export AWS_ACCESS_KEY_ID=test

export AWS_SECRET_ACCESS_KEY=test

export AWS_DEFAULT_REGION=us-east-1

PowerShell equivalent:

# Clear any persistent AWS_PROFILE so the env vars below take precedence

Remove-Item Env:AWS_PROFILE -ErrorAction SilentlyContinue

$env:AWS_ENDPOINT_URL = "http://localhost:4566"

$env:AWS_ACCESS_KEY_ID = "test"

$env:AWS_SECRET_ACCESS_KEY = "test"

$env:AWS_DEFAULT_REGION = "us-east-1"

The Remove-Item Env:AWS_PROFILE line matters on Windows: if you've previously set AWS_PROFILE=production (or anything else) at the user level, the AWS CLI will keep using that profile and ignore the env vars we just set. Clearing it first makes the LocalStack config win.

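Reload your shell. Now aws s3 ls calls LocalStack instead of real AWS: same CLI, different endpoint. One caveat: AWS_ENDPOINT_URL is only honored by fairly recent AWS CLI releases (v2.13 or later, as far as I know), and distro packages can lag behind. On an older CLI, pass --endpoint-url=http://localhost:4566 on each command instead.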
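Because the only thing separating LocalStack from real AWS is that one variable, a small guard is worth having before you run anything destructive. This is a sketch under my own assumptions: assert_local_endpoint is a hypothetical helper, and the list of "local" hostnames is just the ones used in this article.

```shell
# Hypothetical helper: refuse to continue unless AWS_ENDPOINT_URL looks local.
assert_local_endpoint() {
  case "${AWS_ENDPOINT_URL:-}" in
    http://localhost:*|http://127.0.0.1:*|http://localhost.localstack.cloud:*)
      echo "ok: $AWS_ENDPOINT_URL"
      ;;
    "")
      echo "AWS_ENDPOINT_URL is unset; the CLI may talk to real AWS" >&2
      return 1
      ;;
    *)
      echo "AWS_ENDPOINT_URL points somewhere else: $AWS_ENDPOINT_URL" >&2
      return 1
      ;;
  esac
}
```

Chaining it in front of a command, as in assert_local_endpoint && aws s3 rb s3://some-bucket --force, means a forgotten export fails fast instead of reaching a real account.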

Or use a dedicated localstack AWS profile (cleaner for anyone with day-job AWS)

The LocalStack AWS CLI integration docs recommend splitting the config across the two AWS files — keys in ~/.aws/credentials, region/output/endpoint in ~/.aws/config:

# ~/.aws/credentials

[localstack]

aws_access_key_id = test

aws_secret_access_key = test

# ~/.aws/config

[profile localstack]

region = us-east-1

output = json

endpoint_url = http://localhost.localstack.cloud:4566

Then either pin per command (aws --profile localstack s3 ls) or set AWS_PROFILE=localstack for the project folder. Your real-AWS profiles stay untouched.

The hostname localhost.localstack.cloud is the one LocalStack recommends — it resolves to 127.0.0.1 from the host and to the LocalStack container from inside Docker networks, so the same config works everywhere.

Optional shortcuts for awslocal

If you want to type awslocal s3 ls instead of aws s3 ls, two options — pick whichever is less hassle for you.

Option A — install the Python wrapper:

awslocal is a small Python package that sets the endpoint URL and placeholder credentials for you.

pipx install awscli-local # or: pip install awscli-local

Option B — define a shell function (no install):

Drop into ~/.zshrc or ~/.bashrc:

awslocal() {
  AWS_PROFILE= \
  AWS_ACCESS_KEY_ID=test \
  AWS_SECRET_ACCESS_KEY=test \
  AWS_DEFAULT_REGION=us-east-1 \
  aws --endpoint-url=http://localhost:4566 "$@"
}

The empty AWS_PROFILE= makes sure your day-job profile doesn't leak in. PowerShell equivalent:

function awslocal {

$env:AWS_PROFILE = ""

$env:AWS_ACCESS_KEY_ID = "test"

$env:AWS_SECRET_ACCESS_KEY = "test"

$env:AWS_DEFAULT_REGION = "us-east-1"

aws --endpoint-url=http://localhost:4566 @args

}

This series uses awslocal in code samples because it's shorter. Every command works the same way with plain aws once you've exported the env vars above. Use whichever you prefer.

Step 6: Smoke test

Create your first bucket:

aws s3 mb s3://hello-localstack

You should see:

make_bucket: hello-localstack

Confirm it's there:

aws s3 ls

2026-05-03 12:34:56 hello-localstack

Put a file in it:

echo "hello from localstack" > hello.txt

aws s3 cp hello.txt s3://hello-localstack/

aws s3 ls s3://hello-localstack/

If your file shows up, LocalStack is healthy and you're ready for Part 1.

(If you went with the awslocal shortcut from Step 5, every aws here works the same way as awslocal — interchangeable.)
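The manual steps above can be folded into one repeatable check that uploads a file, downloads it again, and compares the two. This is a sketch, not part of the series' official tooling: smoke_roundtrip and the roundtrip.txt key are names I made up, and it assumes the bucket already exists and that aws is pointed at LocalStack as in Step 5.

```shell
# Hypothetical helper: upload a file, download it again, compare the bytes.
# Usage: smoke_roundtrip [bucket]
smoke_roundtrip() {
  local bucket="${1:-hello-localstack}"
  local tmp rc
  tmp="$(mktemp -d)" || return 1
  echo "hello from localstack" > "$tmp/up.txt"
  # Each step only runs if the previous one succeeded.
  aws s3 cp "$tmp/up.txt" "s3://$bucket/roundtrip.txt" >/dev/null &&
    aws s3 cp "s3://$bucket/roundtrip.txt" "$tmp/down.txt" >/dev/null &&
    cmp -s "$tmp/up.txt" "$tmp/down.txt"
  rc=$?
  rm -rf "$tmp"
  if [ "$rc" -eq 0 ]; then
    echo "round trip ok"
  else
    echo "round trip failed" >&2
  fi
  return "$rc"
}
```

It leaves roundtrip.txt behind in the bucket, which is harmless here since the bucket is disposable.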

Step 7: Set up the checkpoint mechanism (init hooks)

State doesn't survive a restart on the free Hobby tier — persistence is paid-only. The good news: there's a free workaround that turns out to be even better for a tutorial series. Init hooks let LocalStack run shell scripts every time the container starts. Instead of saving state, we save a script that recreates state in seconds.

Init hooks are listed as "Included in Plans: Hobby, Base, Ultimate", so they work on the free tier.

The pattern across this series:

- Each article ends with a small "save this as a checkpoint" script

- Drop the scripts into your init/ready.d/ folder, named with a numeric prefix per article (01-part1-s3.sh, 02-part2-dynamodb.sh, ...)

- Every docker compose up re-runs them in order, so your resources rebuild themselves

- Want to jump in at Part 4 without doing the previous ones? Add scripts 01 through 04 to your init/ready.d/ and you're set up. They're additive

The mount in our compose file (./init:/etc/localstack/init) is already there. Make the directory:

mkdir -p init/ready.d

Try it with a tiny example. Save this as init/ready.d/00-part0-smoke.sh:

#!/usr/bin/env bash

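# Init hooks run inside the LocalStack container, where the host's "localstack" profile doesn't exist, so use awslocal

awslocal s3 mb s3://hello-localstack 2>/dev/null || true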

echo "[bootstrap] part 0 — smoke bucket ready"

Make it executable:

chmod +x init/ready.d/00-part0-smoke.sh

Restart LocalStack to pick up the new mount and the script:

docker compose down

docker compose up -d

sleep 5

Confirm:

aws s3 ls

# 2026-05-03 12:34:56 hello-localstack

The bucket came back automatically. The 2>/dev/null || true makes the command idempotent — if a future run finds the bucket already there, the error is swallowed and the script keeps going.

That's the whole pattern. Each part of the series will give you one or two more lines to paste into the same folder.
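One variation worth knowing: 2>/dev/null || true hides every error, not just "bucket already exists". A slightly stricter sketch checks for the bucket first and only then creates it, so genuine failures stay visible in the logs. ensure_bucket is a hypothetical helper name, and I'm assuming awslocal is available inside the container, as it is in LocalStack's own init hook examples.

```shell
# Hypothetical helper for init scripts: create a bucket only if it is missing.
# Usage: ensure_bucket <bucket-name>
ensure_bucket() {
  local bucket="$1"
  # head-bucket exits non-zero when the bucket does not exist.
  if awslocal s3api head-bucket --bucket "$bucket" >/dev/null 2>&1; then
    echo "[bootstrap] bucket $bucket already exists"
  else
    # Errors from the create call are deliberately left on stderr.
    awslocal s3 mb "s3://$bucket" >/dev/null &&
      echo "[bootstrap] created bucket $bucket"
  fi
}
```

Inside a checkpoint script you would define the function once and then call ensure_bucket hello-localstack for each bucket the article needs.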

Caveat for Lambda steps: Lambda functions need a .zip of your code, which is awkward to deploy through a one-off shell script. Articles that introduce Lambda (Parts 3 and 4) will walk through deployment manually, and the checkpoint scripts will skip the Lambda parts — only the supporting resources (buckets, tables, queues, IAM roles) get bootstrapped automatically.

Common pitfalls

A short list of things that have caught readers before:

- "Auth token not set" or services refusing to start. Re-export LOCALSTACK_AUTH_TOKEN and run docker compose up -d --force-recreate. The variable has to be in the shell that runs docker compose, not just in the container.

- Port 4566 already in use. Usually another LocalStack container is still running. Run docker ps -a | grep localstack and kill the duplicate.

- aws: Could not connect to the endpoint URL. Either the AWS_ENDPOINT_URL env var isn't set in this shell or the container isn't running (docker ps will tell you). Re-source your profile (source ~/.zshrc, or . $PROFILE in PowerShell) or re-export the four env vars from Step 5.

- AWS CLI says "Unable to locate credentials". Same root cause: the four env vars from Step 5 aren't in scope. Re-source the profile or set them inline for one command. If you went with the awslocal shortcut, pipx ensurepath (then a new shell) usually fixes "command not found" for that route.

- State doesn't survive a restart. Expected on the free Hobby tier (persistence is paid-only). Use the init-hooks pattern from Step 7 to recreate resources automatically.

- Init script doesn't run. Check that the file is executable (chmod +x) and that init/ready.d/ is mounted at /etc/localstack/init/ready.d inside the container. docker compose exec localstack ls /etc/localstack/init/ready.d confirms.

- Lambda invocations hang. The Docker socket mount is missing. Add /var/run/docker.sock:/var/run/docker.sock and recreate the container.

- Slow first request after a cold start. Normal: LocalStack lazy-loads services on first request by default. The second call is instant.
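For the "init script doesn't run" case, a forgotten chmod +x is the usual culprit. The sketch below lists hook scripts that lack the owner execute bit; find_non_executable_hooks is a hypothetical helper name, and the default path assumes you run it from the project root.

```shell
# Hypothetical helper: list *.sh hook scripts missing the owner execute bit.
# Usage: find_non_executable_hooks [directory]
find_non_executable_hooks() {
  local dir="${1:-init/ready.d}"
  # -perm -100 matches files with the owner execute bit set; "!" inverts it.
  find "$dir" -maxdepth 1 -type f -name '*.sh' ! -perm -100
}
```

Empty output means every script in the folder is executable; anything it prints needs a chmod +x before the next docker compose up.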

Cleanup commands worth knowing

# Stop the container without removing it

docker compose stop

# Stop and remove the container; anything persisted in .localstack stays on disk (paid plans only)

docker compose down

# Stop, remove the container, AND wipe any persisted state

docker compose down && rm -rf .localstack

The third one is the "I've got it into a weird state, start fresh" command. You'll want it.

A small habit worth picking up

Drop these helpers in your shell config, next to the token export from Step 1, so starting a session is one short command:

# ~/.zshrc or ~/.bashrc

ls-up() { (cd ~/projects/localstack-series && docker compose up -d); }

ls-down() { (cd ~/projects/localstack-series && docker compose down); }

ls-logs() { (cd ~/projects/localstack-series && docker compose logs -f localstack); }

Then ls-up, ls-logs, ls-down from anywhere on the machine.

What we'll wire up next

You've got LocalStack running, the AWS CLI pointed at it, a smoke-tested S3 bucket, and the init-hooks checkpoint pattern in place. The next part uses this setup for something real: a tiny photo uploader where the browser uploads files directly to S3 via a presigned URL — the same pattern Slack, Imgur, and most SaaS apps use for user-generated content. By the end of Part 1, you'll have a working endpoint and a single-page upload form you'll keep around for the rest of the series.

The full series

- Part 0 — Start here: series intro and installing LocalStack (this article)

- Part 1 — S3 locally: buckets, presigned URLs, and a tiny photo uploader (next)

- Part 2 — DynamoDB locally: building a URL shortener data layer

- Part 3 — Lambda + S3 events: an image thumbnailer pipeline

- Part 4 — API Gateway + Lambda + JWT auth: a real HTTP API

- Part 5 — SQS + SNS: a background job queue with a dead-letter queue

- Part 6 — EventBridge + Step Functions: orchestrating a photo-processing workflow

- Part 7 — Secrets Manager + KMS: handling secrets and encryption locally

- Part 8 — Terraform (tflocal) + GitHub Actions: integration tests against LocalStack

Sources

- LocalStack getting started — installation and Docker Compose example

- LocalStack pricing and plan comparison

- LocalStack 2026.03.0 release notes — auth token rollout

- LocalStack 2026.04.0 release notes — current version, App Inspector, lstk CLI

- LocalStack AWS CLI integration — recommended profile setup

- LocalStack configuration reference (SERVICES, EAGER_SERVICE_LOADING)

- LocalStack persistence docs (Base/Ultimate only)

- LocalStack Lambda docs (Docker socket required)

- LocalStack init hooks (free on Hobby) — the checkpoint pattern

- awscli-local on GitHub
